Python Read Log File Line by Line Into Dictionary

Search engine crawl data institute within log files is a fantastic source of data for any SEO professional person.

By analyzing log files, y'all can proceeds an agreement of exactly how search engines are crawling and interpreting your website – clarity yous but cannot get when relying upon tertiary-political party tools.

This will allow you to:

- Validate your theories by providing indisputable evidence of how search engines are behaving.

- Prioritize your findings by helping you to understand the calibration of a problem and the likely impact of fixing it.

- Uncover additional problems that aren't visible when using other data sources.

Merely despite the multitude of benefits, log file data isn't used as frequently every bit it should be. The reasons are understandable:

- Accessing the data usually involves going through a dev team, which tin can have time.

- The raw files tin can be huge and provided in an unfriendly format, and so parsing the data takes effort.

- Tools designed to brand the process easier may need to be integrated before the data tin can exist piped to it, and the costs can exist prohibitive.

All of these issues are perfectly valid barriers to entry, but they don't have to exist insurmountable.

With a chip of basic coding knowledge, you lot can automate the unabridged procedure. That's exactly what we're going to exercise in this step-past-pace lesson on using Python to analyze server logs for SEO.

You'll discover a script to get you started, too.

Initial Considerations

One of the biggest challenges in parsing log file information is the sheer number of potential formats. Apache, Nginx, and IIS offer a range of unlike options and let users to customize the information points returned.

To complicate matters further, many websites at present use CDN providers like Cloudflare, Cloudfront, and Akamai to serve up content from the closest edge location to a user. Each of these has its ain formats, every bit well.

We'll focus on the Combined Log Format for this post, equally this is the default for Nginx and a common choice on Apache servers.

If you're unsure what type of format you lot're dealing with, services similar Builtwith and Wappalyzer both provide excellent data about a website's tech stack. They can help you determine this if you don't have direct access to a technical stakeholder.

Still none the wiser? Endeavour opening ane of the raw files.

Ofttimes, comments are provided with data on the specific fields, which can then be cross-referenced.

#Fields: time c-ip cs-method cs-uri-stem sc-status cs-version 17:42:15 172.16.255.255 GET /default.htm 200 HTTP/1.0

Another consideration is what search engines we want to include, every bit this volition need to be factored into our initial filtering and validation.

To simplify things, we'll focus on Google, given its dominant 88% Us market place share.

Allow's become started.

1. Identify Files and Determining Formats

To perform meaningful SEO analysis, we desire a minimum of ~100k requests and two-4 weeks' worth of information for the average site.

Due to the file sizes involved, logs are normally split into individual days. It's almost guaranteed that yous'll receive multiple files to process.

As nosotros don't know how many files we'll be dealing with unless we combine them earlier running the script, an of import first step is to generate a list of all of the files in our binder using the glob module.

This allows us to render whatsoever file matching a blueprint that we specify. As an example, the following lawmaking would match whatever TXT file.

import glob files = glob.glob('*.txt') Log files can exist provided in a diverseness of file formats, still, not just TXT.

In fact, at times the file extension may not exist one you recognize. Here's a raw log file from Akamai'south Log Commitment Service, which illustrates this perfectly:

bot_log_100011.esw3c_waf_S.202160250000-2000-41

Additionally, it's possible that the files received are split beyond multiple subfolders, and we don't want to waste time copying these into a singular location.

Thankfully, glob supports both recursive searches and wildcard operators. This means that nosotros can generate a list of all the files within a subfolder or child subfolders.

files = glob.glob('**/*.*', recursive=True) Next, we want to identify what types of files are inside our list. To do this, the MIME type of the specific file can exist detected. This will tell united states exactly what type of file we're dealing with, regardless of the extension.

This tin can be achieved using python-magic, a wrapper effectually the libmagic C library, and creating a simple office.

pip install python-magic pip install libmagic

import magic def file_type(file_path): mime = magic.from_file(file_path, mime=True) return mime

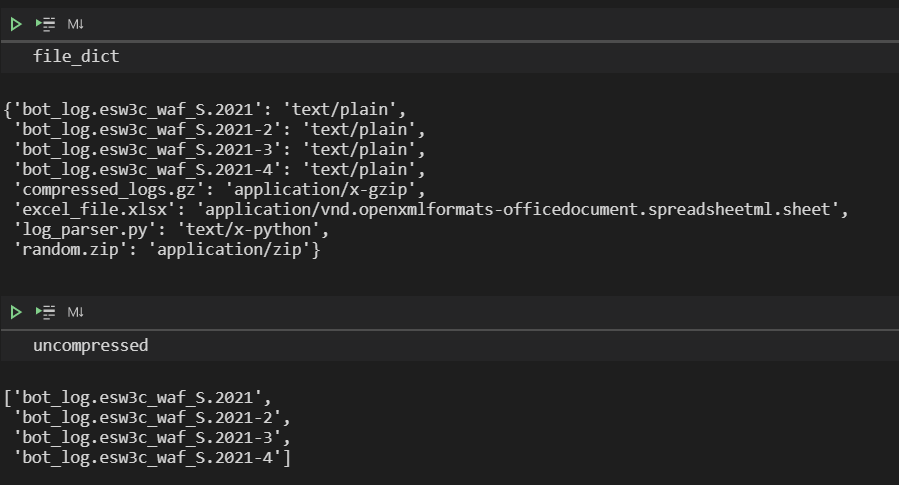

List comprehension can then exist used to loop through our files and utilize the office, creating a dictionary to store both the names and types.

file_types = [file_type(file) for file in files] file_dict = dict(nil(files, file_types))

Finally, a function and a while loop to extract a list of files that return a MIME type of text/patently and exclude annihilation else.

uncompressed = [] def file_identifier(file): for central, value in file_dict.items(): if file in value: uncompressed.suspend(central) while file_identifier('text/plain'): file_identifier('text/plain') in file_dict

2. Excerpt Search Engine Requests

Later filtering downwardly the files in our folder(s), the side by side stride is to filter the files themselves by simply extracting the requests that nosotros care virtually.

This removes the need to combine the files using command-line utilities like GREP or FINDSTR, saving an inevitable 5-10 minute search through open notebook tabs and bookmarks to notice the correct command.

In this instance, as we only want Googlebot requests, searching for 'Googlebot' volition lucifer all of the relevant user agents.

Nosotros tin can utilize Python's open function to read and write our file and Python'south regex module, RE, to perform the search.

import re pattern = 'Googlebot' new_file = open('./googlebot.txt', 'west', encoding='utf8') for txt_files in uncompressed: with open up(txt_files, 'r', encoding='utf8') every bit text_file: for line in text_file: if re.search(blueprint, line): new_file.write(line) Regex makes this hands scalable using an OR operator.

pattern = 'Googlebot|bingbot'

iii. Parse Requests

In a previous postal service, Village Batista provided guidance on how to use regex to parse requests.

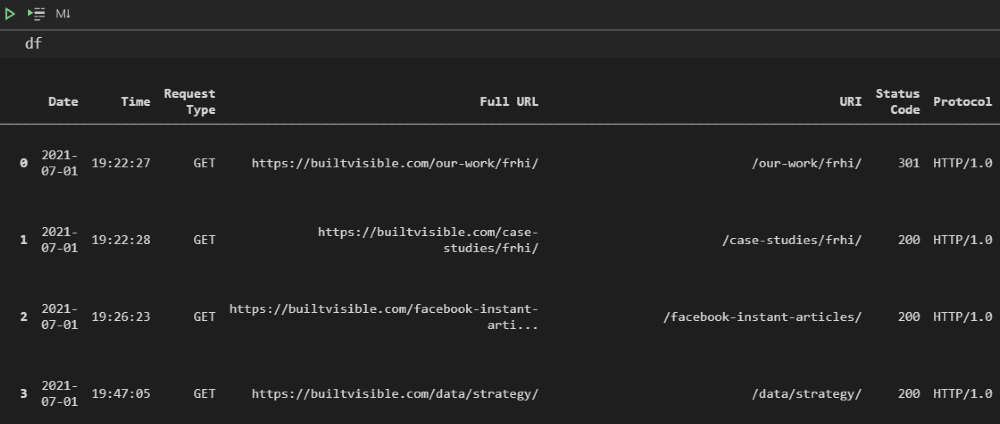

As an culling arroyo, we'll exist using Pandas' powerful inbuilt CSV parser and some basic data processing functions to:

- Drop unnecessary columns.

- Format the timestamp.

- Create a column with full URLs.

- Rename and reorder the remaining columns.

Rather than hardcoding a domain name, the input function can be used to prompt the user and save this as a variable.

whole_url = input('Please enter full domain with protocol: ') # get domain from user input df = pd.read_csv('./googlebot.txt', sep='\south+', error_bad_lines=False, header=None, low_memory=False) # import logs df.drop([one, 2, 4], centrality=one, inplace=True) # drop unwanted columns/characters df[3] = df[3].str.replace('[', '') # split fourth dimension stamp into two df[['Appointment', 'Fourth dimension']] = df[3].str.split(':', 1, expand=Truthful) df[['Request Type', 'URI', 'Protocol']] = df[v].str.split(' ', two, aggrandize=True) # separate uri asking into columns df.drop([three, five], centrality=1, inplace=True) df.rename(columns = {0:'IP', half dozen:'Status Code', 7:'Bytes', 8:'Referrer URL', nine:'User Amanuensis'}, inplace=True) #rename columns df['Full URL'] = whole_url + df['URI'] # concatenate domain name df['Date'] = pd.to_datetime(df['Date']) # declare information types df[['Condition Code', 'Bytes']] = df[['Status Code', 'Bytes']].apply(pd.to_numeric) df = df[['Date', 'Time', 'Request Type', 'Full URL', 'URI', 'Status Code', 'Protocol', 'Referrer URL', 'Bytes', 'User Amanuensis', 'IP']] # reorder columns

4. Validate Requests

It'due south incredibly piece of cake to spoof search engine user agents, making request validation a vital part of the process, lest we finish up drawing false conclusions past analyzing our own third-political party crawls.

To do this, we're going to install a library called dnspython and perform a reverse DNS.

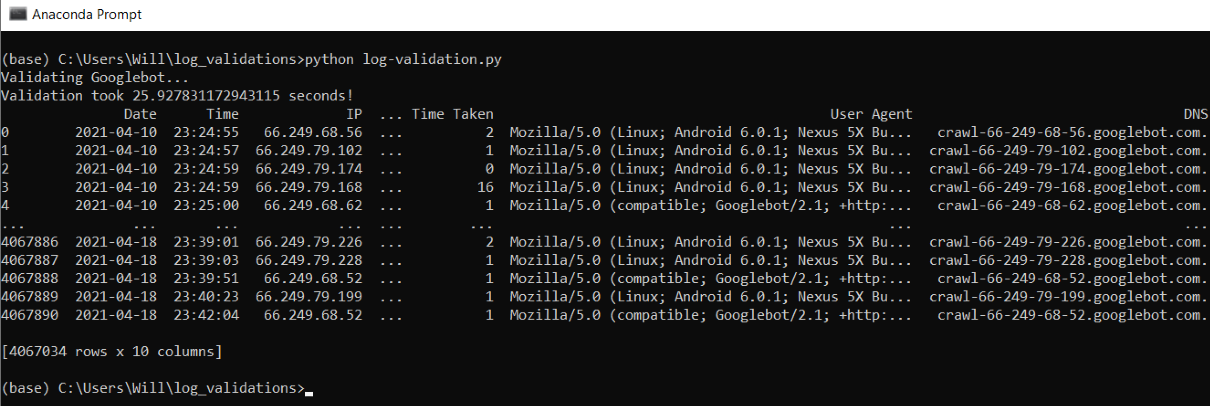

Pandas can be used to drop duplicate IPs and run the lookups on this smaller DataFrame, before reapplying the results and filtering out any invalid requests.

from dns import resolver, reversename def reverseDns(ip): attempt: render str(resolver.query(reversename.from_address(ip), 'PTR')[0]) except: return 'Due north/A' logs_filtered = df.drop_duplicates(['IP']).copy() # create DF with dupliate ips filtered for check logs_filtered['DNS'] = logs_filtered['IP'].apply(reverseDns) # create DNS column with the reverse IP DNS result logs_filtered = df.merge(logs_filtered[['IP', 'DNS']], how='left', on=['IP']) # merge DNS column to total logs matching IP logs_filtered = logs_filtered[logs_filtered['DNS'].str.contains('googlebot.com')] # filter to verified Googlebot logs_filtered.drib(['IP', 'DNS'], axis=i, inplace=True) # driblet dns/ip columns Taking this approach will drastically speed upward the lookups, validating millions of requests in minutes.

In the example beneath, ~4 million rows of requests were processed in 26 seconds.

v. Pin the Data

Subsequently validation, we're left with a cleansed, well-formatted data set and can begin pivoting this information to more easily analyze data points of interest.

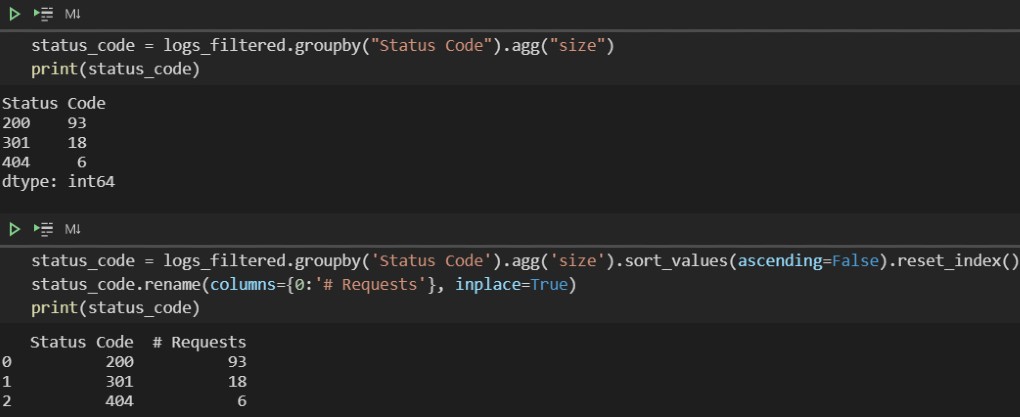

Offset off, let's begin with some simple aggregation using Pandas' groupby and agg functions to perform a count of the number of requests for different status codes.

status_code = logs_filtered.groupby('Status Code').agg('size') To replicate the type of count you are used to in Excel, it's worth noting that nosotros demand to specify an aggregate role of 'size', not 'count'.

Using count will invoke the part on all columns within the DataFrame, and nada values are handled differently.

Resetting the index volition restore the headers for both columns, and the latter column can be renamed to something more meaningful.

status_code = logs_filtered.groupby('Status Code').agg('size').sort_values(ascending=False).reset_index() status_code.rename(columns={0:'# Requests'}, inplace=True)

For more advanced data manipulation, Pandas' inbuilt pivot tables offer functionality comparable to Excel, making complex aggregations possible with a singular line of code.

At its most basic level, the function requires a specified DataFrame and alphabetize – or indexes if a multi-alphabetize is required – and returns the respective values.

pd.pivot_table(logs_filtered, index['Full URL'])

For greater specificity, the required values tin be declared and aggregations – sum, mean, etc – applied using the aggfunc parameter.

Too worth mentioning is the columns parameter, which allows u.s.a. to display values horizontally for clearer output.

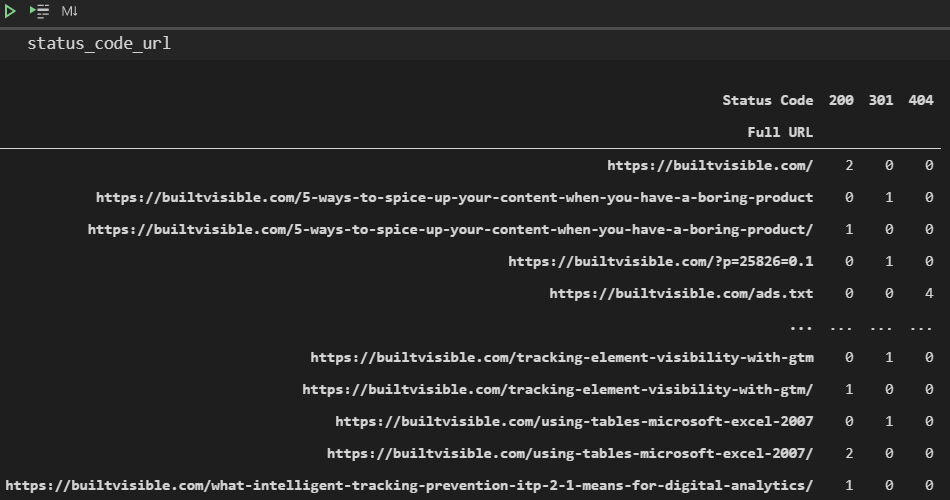

status_code_url = pd.pivot_table(logs_filtered, alphabetize=['Full URL'], columns=['Status Code'], aggfunc='size', fill_value=0)

Here'southward a slightly more complex example, which provides a count of the unique URLs crawled per user amanuensis per day, rather than just a count of the number of requests.

user_agent_url = pd.pivot_table(logs_filtered, index=['User Agent'], values=['Full URL'], columns=['Date'], aggfunc=pd.Series.nunique, fill_value=0)

If you're yet struggling with the syntax, check out Mito. Information technology allows you to interact with a visual interface within Jupyter when using JupyterLab, but still outputs the relevant code.

Incorporating Ranges

For information points like bytes which are likely to have many different numerical values, information technology makes sense to bucket the data.

To practice so, nosotros tin can define our intervals within a list and then use the cut function to sort the values into bins, specifying np.inf to catch annihilation above the maximum value declared.

byte_range = [0, 50000, 100000, 200000, 500000, one thousand thousand, np.inf] bytes_grouped_ranges = (logs_filtered.groupby(pd.cut(logs_filtered['Bytes'], bins=byte_range, precision=0)) .agg('size') .reset_index() ) bytes_grouped_ranges.rename(columns={0: '# Requests'}, inplace=True) Interval notation is used within the output to define exact ranges, e.m.

(50000 100000]

The round brackets bespeak when a number is not included and a square bracket when it is included. So, in the above example, the saucepan contains data points with a value of between 50,001 and 100,000.

6. Export

The final step in our procedure is to export our log data and pivots.

For ease of analysis, information technology makes sense to export this to an Excel file (XLSX) rather than a CSV. XLSX files support multiple sheets, which ways that all the DataFrames tin be combined in the same file.

This can be achieved using to excel. In this example, an ExcelWriter object likewise needs to be specified because more than one sail is being added into the same workbook.

writer = pd.ExcelWriter('logs_export.xlsx', engine='xlsxwriter', datetime_format='dd/mm/yyyy', options={'strings_to_urls': Imitation}) logs_filtered.to_excel(author, sheet_name='Principal', alphabetize=Simulated) pivot1.to_excel(writer, sheet_name='My pivot') writer.salve() When exporting a big number of pivots, it helps to simplify things by storing DataFrames and sheet names in a dictionary and using a for loop.

sheet_names = { 'Request Status Codes Per Mean solar day': status_code_date, 'URL Status Codes': status_code_url, 'User Amanuensis Requests Per Day': user_agent_date, 'User Agent Requests Unique URLs': user_agent_url, } for sheet, name in sheet_names.items(): name.to_excel(writer, sheet_name=sheet) One concluding complication is that Excel has a row limit of 1,048,576. We're exporting every asking, and then this could crusade issues when dealing with large samples.

Considering CSV files have no limit, an if statement can exist employed to add together in a CSV export every bit a fallback.

If the length of the log file DataFrame is greater than 1,048,576, this will instead be exported as a CSV, preventing the script from failing while notwithstanding combining the pivots into a singular consign.

if len(logs_filtered) <= 1048576: logs_filtered.to_excel(writer, sheet_name='Chief', index=Fake) else: logs_filtered.to_csv('./logs_export.csv', alphabetize=Simulated) Final Thoughts

The additional insights that tin can be gleaned from log file data are well worth investing some time in.

If you've been avoiding leveraging this information due to the complexities involved, my hope is that this postal service will convince you that these can exist overcome.

For those with access to tools already who are interested in coding, I hope breaking downwards this process cease-to-cease has given you a greater understanding of the considerations involved when creating a larger script to automate repetitive, time-consuming tasks.

The full script I created can exist found here on Github.

This includes additional extras such as GSC API integration, more pivots, and support for two more log formats: Amazon ELB and W3C (used by IIS).

To add in another format, include the name within the log_fomats list on line 17 and add an additional elif argument on line 193 (or edit one of the existing ones).

There is, of course, massive scope to expand this further. Stay tuned for a part two post that will encompass the incorporation of data from third-political party crawlers, more advanced pivots, and information visualization.

More Resources:

- How to Use Python to Analyze SEO Data: A Reference Guide

- 8 Python Libraries for SEO & How To Use Them

- Avant-garde Technical SEO: A Complete Guide

Prototype Credits

All screenshots taken by author, July 2021

Source: https://www.searchenginejournal.com/python-analysis-server-log-files/412898/

0 Response to "Python Read Log File Line by Line Into Dictionary"

Post a Comment